There’s a question that comes up in almost every AI automation project I work on: how much should the agent just do, versus when should it stop and check with a human?

Get it wrong in one direction and you have a glorified chatbot that can’t do anything without approval. Get it wrong in the other and you have an agent that confidently sends the wrong email to 3,000 customers.

I’ve been developing a mental model for this that I call the reversibility × stakes matrix.

The Matrix

Picture two axes:

- Reversibility: Can this action be undone? Sending a draft email to yourself → easily reversible. Publishing a press release → not so much.

- Stakes: What’s the cost of getting it wrong? Tagging a task in the wrong project → low. Canceling a subscription → high.

These two dimensions give you four quadrants:

| Low Stakes | High Stakes | |

|---|---|---|

| Reversible | Let the agent go | Agent goes, but logs everything |

| Irreversible | Agent proposes, human approves | Human must approve, always |

Most of the interesting (and dangerous) territory lives in the bottom row.

Why “Just Add a Confirmation Step” Isn’t Enough

The naive solution is to add a human-in-the-loop checkpoint before every irreversible action. But this defeats the purpose of automation if those checkpoints happen too often, and it creates a false sense of security — humans rubber-stamp approvals when they get too many of them.

The better approach is to design agents that:

-

Batch decisions — instead of asking for approval 20 times, gather everything that needs human review and present it once, clearly.

-

Provide evidence — don’t just say “I want to delete this contact.” Show the data that led to that conclusion. A good agent creates a paper trail.

-

Default to the safer action — when uncertain, do less. Archive instead of delete. Draft instead of send. Flag instead of remove.

-

Make it easy to course-correct — if an agent does take an action that turns out to be wrong, recovery should be one click, not a support ticket.

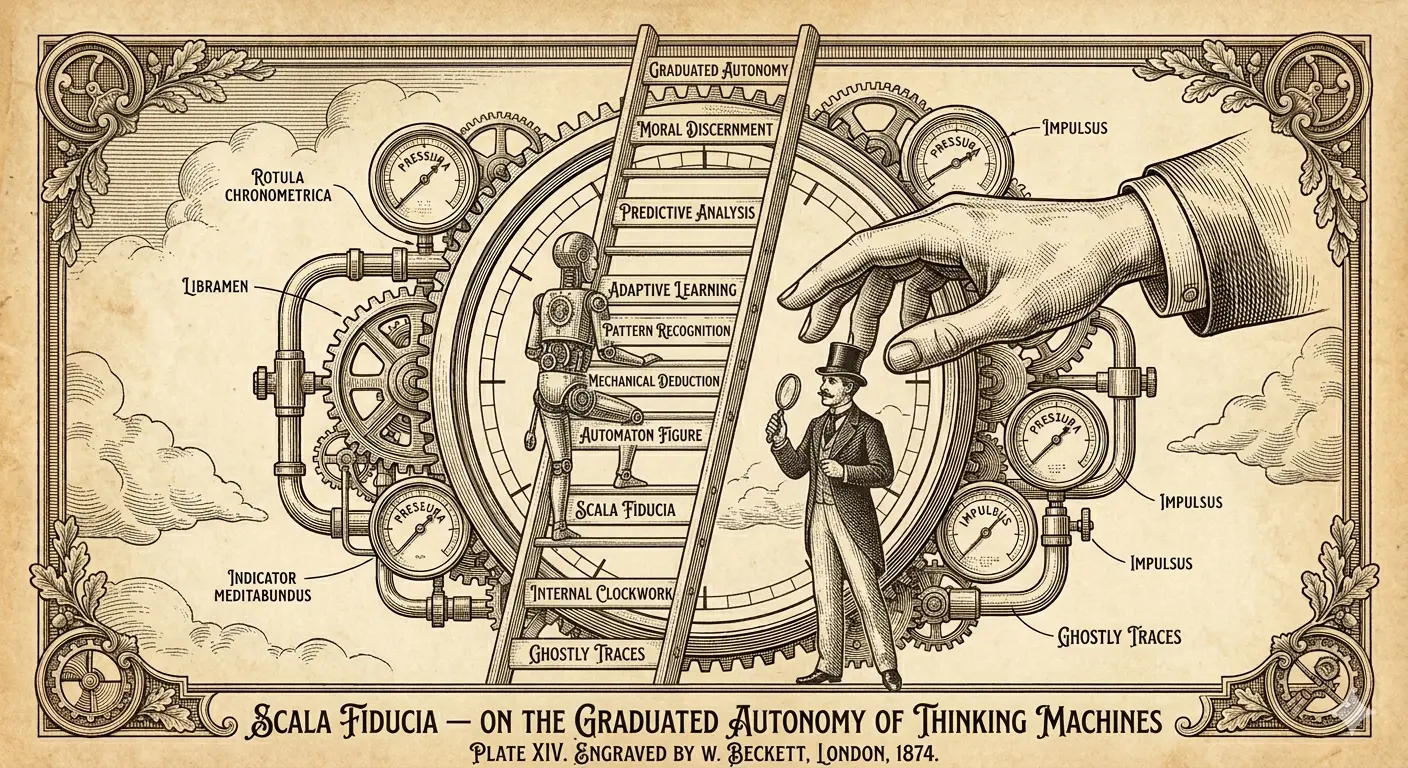

The Trust Ladder

I think about building agent autonomy like building trust with a new hire. You don’t give them the keys to everything on day one. You watch how they handle the small stuff. You expand their autonomy as they demonstrate good judgment.

With agents, this means:

- Start with shadow mode (agent recommends, human does)

- Move to supervised automation (agent does, human reviews before it takes effect)

- Graduate to autonomous operation for proven task types only

The goal isn’t maximum autonomy. The goal is the right level of autonomy for each specific task, earned through demonstrated reliability.